Reviewing Progress on Responsible AI Diffusion

India's Charter for the Democratic Diffusion of AI reframes a critical question: how should transformative technology move across borders? What the charter gets right—and what it still needs to build.

Summary

This piece reviews approaches to AI diffusion, including India's Charter for the Democratic Diffusion of AI, which reframes diffusion as a development challenge focused on building local capacity and sovereignty. It contrasts the charter with earlier diffusion logics (open science, security-based export controls, and vendor-led commercial expansion), arguing that none adequately built absorptive capacity in the Global South.

Definitions

- Responsible AI: AI systems designed, deployed, and governed to be safe, ethical, fair, and accountable, considering social, economic, and environmental impacts.

- Diffusion: The process through which AI technologies, tools, or knowledge spread across organizations, communities, or countries, including both access and practical adoption.

Responsible AI Diffusion: Reviewing India's Charter Against Global Practice

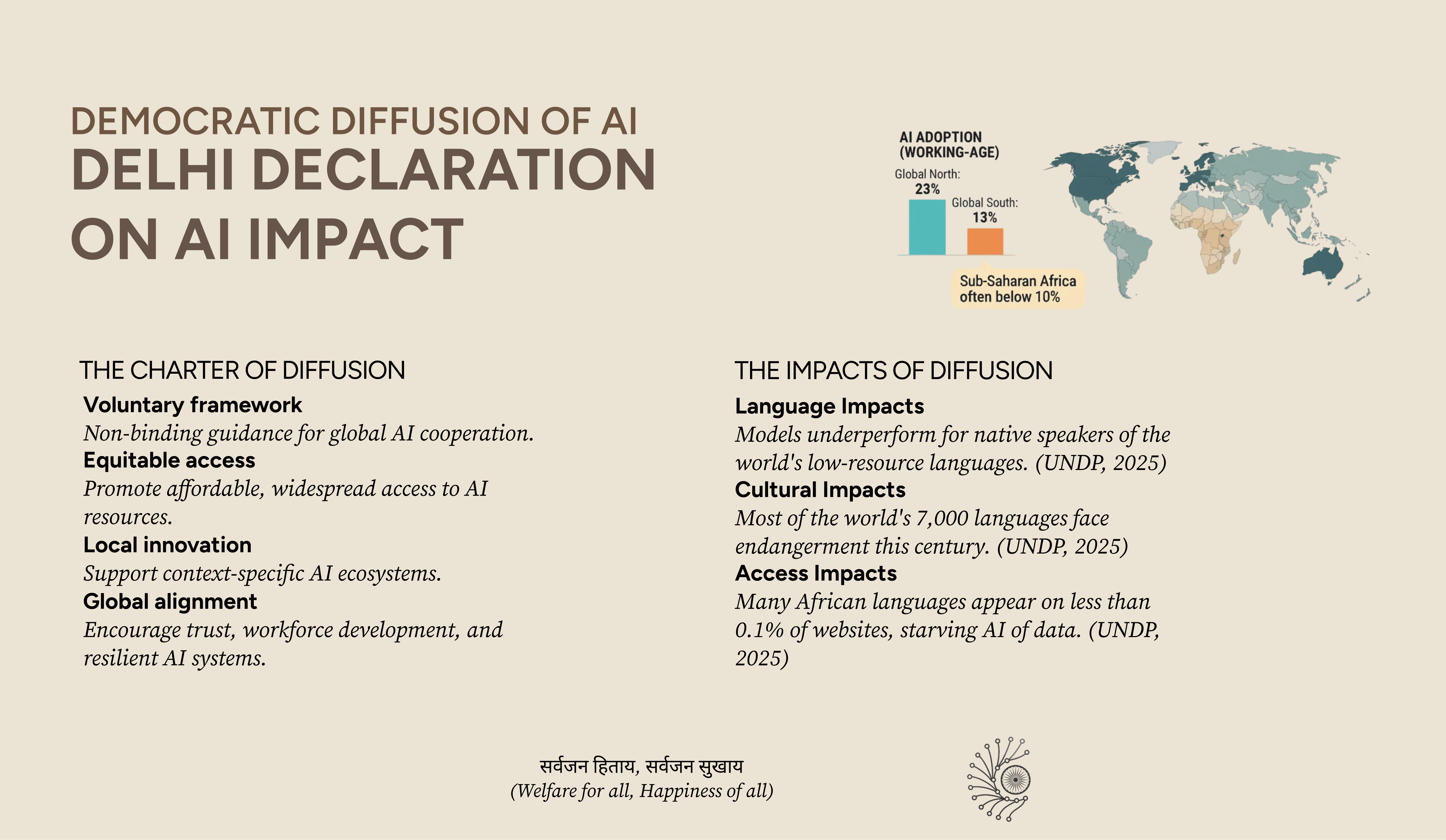

AI is spreading quickly around the world, but not always in ways that help people or communities. Some countries focus on control and security, others on building local capabilities, and many have little say in how AI reaches them. Thinking carefully about how AI moves and is used matters for fairness, safety, and development.

Since 2022, different approaches have shaped AI diffusion, often emphasizing control, capability-building, or market expansion. While these efforts spread AI technologies, they did not fully support locally relevant, responsible, or accountable use. How should AI systems move across contexts in ways that benefit people? This question has guided discussions about AI diffusion for years. Research on AI deployment and development suggests that diffusion should be treated primarily as a development challenge, rather than only a security or market issue. This shifts the focus from "who gains access?" to "who develops the capacity to use access responsibly and effectively?"

Three Logics of AI Diffusion (2018–2026)

Technology does not diffuse uniformly. Different mechanisms carry it across borders, and each mechanism produces fundamentally different outcomes for recipients. Understanding these three logics—often operating simultaneously rather than sequentially—reveals what India's charter is attempting to displace and why.

Several models of diffusion have been tried. The trajectory runs from open science norms through security gatekeeping to commercially conditional access, each placing different constraints on autonomous development.

Open dissemination by default

AI research and tools circulate freely via academic publication, open-source repositories, and commercial GPU markets. No formal export controls on compute or model weights. Access follows scientific and market norms. The global research ecosystem operates on principles of academic freedom and open collaboration.

First export controls on advanced chips

The U.S. Department of Commerce restricts exports of Nvidia's most advanced GPUs and other advanced semiconductors to China, citing national security concerns. This marks the first explicit attempt to use compute as a geopolitical lever. The framing shifts from "open science benefits everyone" to "advanced compute is a strategic asset requiring control."

Controls expand progressively

What began as restrictions on advanced Nvidia chips and other semiconductors bound for China broadens to cover more chip architectures, additional countries, and eventually certain closed AI model weights. Compute becomes an explicit instrument of U.S. foreign policy.

Biden-Harris AI Diffusion Rule

The Bureau of Industry and Security formalizes the export control logic into a comprehensive regulatory framework. Advanced AI exports are gated by national security assessments, country tiers, and licensing requirements covering both semiconductors and certain closed AI model weights. The Global South faces structural exclusion from unrestricted access despite the rule's stated goal of "responsible diffusion."

Rule rescinded, controls remain

The Department of Commerce rescinds the Biden-era rule citing burden and diplomatic consequences. Export controls on advanced chips remain active. New guidance tightens restrictions on offshore chip use. A replacement framework is signaled but not yet published.

Trump administration: allies-first model

Policy shifts toward preferential access for allied states and tighter restrictions on adversary states. Location verification proposals emerge to prevent restricted chips from reaching prohibited jurisdictions after point of sale. Industry voices warn of competitiveness risk. The mechanism shifts from security-filtered to commercially conditional, with geopolitical alignment as the primary filter.

Diffusion Charter Finalized

The Charter for the Democratic Diffusion of AI is endorsed by 20 countries and 4 international organizations, representing the first major Global South-led alternative to security-framed diffusion models. The charter explicitly rejects binding obligations in favor of voluntary, adaptive coordination.

Diffusion 1.0: Open Diffusion (2018–2021)

In the early phase of AI diffusion (roughly 2018–2021), much of the work on models, datasets, and tools was shared through open scientific and engineering channels rather than through formal government controls. Research papers were routinely posted on preprint servers like arXiv, and many important models and codebases were released openly by research groups and community teams.

Open-source releases of foundation models and tools helped lower barriers to entry for a broader set of developers and researchers. A later example is LLaMA: Open and Efficient Foundation Language Models (2023), which built on open-release practices that were already emerging in this period.

Scholarship has also examined the broader ecosystem effects of open‑sourcing powerful models, noting both opportunities and risks associated with wide dissemination, as in On the Opportunities and Risks of Foundation Models.

At the same time, the hardware needed to train and run advanced AI—such as GPUs—was commercially available from vendors without the kinds of export controls that became prominent later. Access to these resources, however, required substantial capital, computing infrastructure, and technical expertise, meaning that actual competitive development remained concentrated in organizations with existing capacity.

Diffusion 2.0: Security-Filtered Diffusion (2022–2025)

As frontier AI capabilities advanced and geopolitical competition intensified, governments began treating advanced compute as a strategic asset. The United States initiated this shift through semiconductor export controls targeting China. Those controls broadened progressively to cover wider sets of chip architectures and countries, with implications that extended far beyond U.S.-China bilateral relations.

- Tier A: Full diffusion with no conditionality. Infrastructure, models, knowledge transfer, research participation. For countries with institutional scaffolding in place.

- Tier B: Conditional diffusion with embedded institutional transfer. Technology coupled to mandatory training, phased release schedules, joint governance structures, clear graduation pathways. For countries building capacity.

- Tier C: Restricted diffusion at application layer only. Model weights remain with source institution. Support focused on capacity development. For countries without foundational governance or technical infrastructure.

Critically, tiers are temporary and developmental. A Tier B country should have clarity about what institutional development is required to move to Tier A. That clarity transforms diffusion from perpetual dependency into a defined development intervention with an endpoint.

The shift to compute controls represented a fundamental break from the open diffusion model. The logic was straightforward: if advanced AI capabilities confer strategic advantage, then access to the computation required to develop those capabilities should be controlled by those nations seeking strategic advantage.

Control diffusion by controlling compute. This logic produced a tiered world: countries assessed as security allies received unrestricted access; countries assessed as competitors faced restrictions; countries assessed as lower-risk neutral states occupied ambiguous middle positions.

In January 2025, the Biden-Harris administration crystallized this logic into a comprehensive framework. The AI Diffusion Rule, issued by the Bureau of Industry and Security, created a tiered licensing system for advanced semiconductors and certain closed model weights, with access determined by national security assessments and country-risk evaluations. Countries assessed as higher-risk, or lacking sufficient security infrastructure, faced restrictions. The framework attempted to balance U.S. leadership in AI with measured global diffusion—a tension that would prove irreconcilable.

The framework had two structural limitations:

First, it addressed security eligibility rather than absorptive capacity. Access to advanced AI or chip technology does not automatically translate into local benefit. Without sufficient human capital, governance structures, and economic sustainability, receiving the technology alone cannot generate effective deployment or development outcomes. This was not a "failure"—the rule was designed for security control, not development—but it created collateral damage to legitimate capacity-building pathways.

Second, it failed to address how diffusion actually happens. AI capability spreads through research collaborations, open-source releases, and inter-firm knowledge transfers—channels that operate through entirely different mechanisms. A tiered licensing regime does nothing to shape those pathways, meaning diffusion continued through channels the rule did not address.

By May 2025, the administration rescinded the rule, but export controls on advanced semiconductors remained in place, along with new guidance restricting offshore use. The episode illustrates the ongoing tension between controlling access to sensitive technologies and enabling their use in international partnerships.

Diffusion 3.0: Market Diffusion (2025–2026)

Market diffusion emphasized preferential access for certain partners, with restrictions applied to other recipients. Initiatives such as the Stargate project and select regional technology partnerships provided full-stack AI infrastructure packages (including chips, cloud services, and pretrained models) to approved partners.

Under this approach, recipients operated externally developed AI systems rather than developing all components domestically. As a result, the infrastructure and underlying technology stack—semiconductors, cloud platforms, model weights, and datasets—remained managed by the original vendors.

While providing access to advanced AI, the Stargate model maintains dependency by controlling every layer of the technology stack, leaving recipients unable to develop autonomous capacity.

This can lead to access to cutting-edge technology coupled with structural inability to integrate it into domestic institutional systems. The recipient remains perpetually dependent on the vendor for operation, interpretation, and adaptation.

Diffusion 4.0: Social, Institutional, and Power Dimensions

Diffusion 4.0 must address the limitations of prior frameworks by focusing on capacity, governance, and power distribution. Access alone does not create sustainable or equitable outcomes.

- Human Capital & Skills: Beneficial AI deployment requires trained engineers, domain researchers, and regulators who can evaluate models in local contexts. Skills gaps limit effective use, no matter how widely models are shared.

- Governance & Institutional Capacity: Effective diffusion demands enforceable data protection, sectoral regulations, audit infrastructure, and accountability mechanisms. Principles without power to enforce remain aspirational.

- Economic Sustainability & Research Networks: Long-term adoption requires funding models and collaborative research networks that integrate lower-capacity actors, moving them from consumers to participants in global innovation.

- Power Sharing & Middle-Power Roles: Diffusion is not neutral: actors with more resources and influence naturally dominate (Belfer Center, Middle Powers Program). Power-sharing approaches ensure that medium-capacity actors (states, institutions, or regional consortia) participate in decision-making, platform governance, and resource allocation. This balances asymmetries, prevents lock-in, and strengthens collective bargaining in AI diffusion (Absorptive Capacity in AI).

India's Intervention: What the Charter Actually Proposes

On February 19, 2026, at the India AI Impact Summit in New Delhi, the Democratizing AI Resources Working Group released the Charter for the Democratic Diffusion of AI. The charter was endorsed by 20 countries (Australia, Brazil, Cambodia, Canada, Colombia, Egypt, Ethiopia, France, Iceland, Italy, Japan, Mauritius, Mexico, Mozambique, Nigeria, Norway, Philippines, Serbia, Switzerland, Tanzania) and 4 international organizations (the Asian Development Bank, World Bank, Gates Foundation, and Rockefeller Foundation).

Notably, India—the host and MAITRI platform developer—is not listed among the endorsers. This may reflect diplomatic protocol (hosts signing last), strategic ambiguity, or the document's early status predating final Indian endorsement.

The charter centers on a thesis: diffusion is meaningful only insofar as it builds sovereign, autonomous capacity to develop and deploy AI beneficially. This is not incremental. It is a fundamental reframing.

The Seven Principles: Reading Critically

The charter articulates seven principles intended to guide democratization. But principles in policy documents reveal as much through what they avoid as what they assert. Consider each carefully:

Principle 1: Enhance Digital Inclusion & Connectivity

Commits to reliable digital infrastructure and affordable connectivity, but funding and enforcement are unspecified.

Principle 2: Support Representative AI Development

Strengthens low-resource language datasets and regional AI ecosystems, but responsibility and funding are undefined.

Principle 3: Support Pooled AI Infrastructure

Promotes voluntary regional compute hubs, but asymmetric power and incentives are ignored.

Principle 4: Encourage Openness & Trustworthiness

Openness is conditional on safeguards and trustworthiness, but standards and enforcement are undefined.

Principle 5: Inclusive & Equitable Benefit-Sharing

Promises equitable distribution of AI benefits, but "equity" is undefined and unoperationalized.

Principle 6: AI Capacity & Talent Development

Supports AI literacy and skills pipelines, but does not address brain drain or economic incentives to stay.

Principle 7: Innovative Financing Assistance

Encourages blended financing and public-private partnerships, but only "considers" rather than commits.

The Five Objectives: Where the Charter Becomes Concrete

The charter operationalizes these principles through five specific objectives. These are more concrete than the principles, but still face critical gaps:

Objective 1: Build Resilient & Equitable AI Capacity

Focuses on resilience rather than mere access, but threats and mechanisms are unspecified.

Objective 2: Enhance Access & Affordability of Compute

Technical focus on efficiency and transaction costs, but structural scarcity remains unaddressed.

Objective 3: Enable AI-Ready Data Policies

Promotes inclusive datasets, but data governance, ownership, and community consultation are absent.

Objective 4: Catalyze Inclusive AI Development

Supports small, domain-specific, public-interest AI, but economic sustainability and funding remain unclear.

Objective 5: Expand Use & Scaling of AI Applications

Conditions access on responsible deployment, but responsibility standards and accountability are undefined.

The Implementation Platform and Its Structural Constraints (MAITRI)

MAITRI is envisaged as a federated, enabling platform designed to complement existing national, regional, and multilateral initiatives. The platform will enable accessibility of existing AI compute capacity in private and public sectors, facilitate price transparency and discoverability, and improve access and affordability for end users.

The platform encompasses three functional areas as defined in the charter:

- Countries may link national or regional compute discovery platforms to MAITRI or list available resources with defined access conditions. Providers may offer compute credits, creating a transparent marketplace focused on startups and academic researchers.

- MAITRI hosts multilingual and multicultural datasets in compliance with applicable national data protection laws. Contributors retain ownership while enabling shared access. Governance questions remain: who decides which datasets are hosted, enforces compliance, and resolves disputes about responsible stewardship?

- The platform coordinates collaboration on small language, domain, and region-specific models. Entities may host models and share training insights and evaluation benchmarks voluntarily, based on mutual priorities and agreed terms.

The charter proposes implementation through MAITRI (Multistakeholder AI for Trusted & Resilient Infrastructure), a platform to be developed as a Digital Public Good by the Government of India. This is where the charter's architectural choices become most apparent—and most debated.

The charter's repeated emphasis on "voluntary," "non-binding," and "mutually agreed terms" is not oversight—it is design. The drafters explicitly rejected the Biden-era security framework's coercive tiering in favor of soft coordination.

What incentive exists for a country that has developed a successful domain-specific model to share it with competitors? Knowledge sharing requires institutional cultures and incentive structures that do not yet exist. The charter assumes what it should be building.

Democratization vs Diffusion: Why the Distinction Matters

Democratization and diffusion differ in purpose, mechanism, and outcome when discussing how AI spreads and how its benefits are shared.

Diffusion focuses on how technology moves from one context to another: how systems, tools, or knowledge are adopted and used across different organizations, communities, or countries. It does not intrinsically carry a value judgment about who benefits or how (Technology diffusion policy). Democratization, by contrast, is often used in discussions of AI and other technologies to mean making access, use, or governance more broadly inclusive. In the context of AI, multiple distinct notions of democratization have been articulated: extending access to use, widening participation in development, distributing economic benefits more equitably, or opening up governance and decision-making. These aims do not always align and can even conflict: for example, wider access to powerful models may run against certain governance goals (Democratization).

While reducing vendor lock-in and avoiding dependence on unaccountable, privately managed platforms are legitimate goals, "sovereignty" alone does not guarantee that AI systems serve the public interest (Reboot Democracy).

Research on AI democratization emphasizes that lowering access barriers alone does not ensure that communities' needs shape how the technology is developed, deployed, or governed. Democratization is not just about how widely technology spreads; it is about who makes decisions and whose interests are served (Democratization). Simply making AI widely available does not guarantee that all users can apply it safely or effectively. True democratization requires attention to participation, governance, and how power is distributed, not just adoption.

Charter Constraints: Voluntary by Design

The charter emphasizes collaborative governance and flexible funding to support capacity building.

Capacity building in dozens of developing countries requires sustained resources: training, compute, data, research partnerships, and governance structures. The charter's reliance on voluntary or blended financing is aspirational. Past experience suggests that optional contributions are rarely sufficient to maintain shared infrastructure or long-term programs.

Similarly, the charter outlines principles for progress review and knowledge sharing. These are voluntary and lack enforcement mechanisms. Without authority to ensure follow-through, commitments risk remaining statements of intent rather than actionable obligations.

This gap is not unusual for international frameworks, but it highlights the difference between a vision and the operational capacity to make it real. The charter's voluntary architecture is a feature, not a bug—it represents a bet that soft coordination can succeed where hard regulation failed. This bet requires testing against historical evidence that voluntary technology transfer mechanisms rarely achieve scale without market incentives or institutional enforcement.

What Responsible Diffusion Actually Requires

Setting aside what the charter proposes, what would responsible AI diffusion require operationally? This is where we can move beyond critique toward construction.

Prerequisites for Responsible Diffusion

Responsible diffusion must include iterative assessment across four domains, recognizing that capacity builds through engagement rather than preceding it:

| Domain | What It Measures | Why It Matters |
|---|---|---|
| Technical Capacity | Fine-tuning capability, evaluation tooling, incident response infrastructure, access to compute | Without this, diffusion produces operation of foreign systems, not indigenous capability |
| Governance Capacity | Data protection enforcement, sectoral regulation maturity, liability frameworks, audit infrastructure | Without this, sovereignty rights are theoretical rather than practical |
| Economic Sustainability | Operational funding mechanisms, local value capture structures, maintenance budgets | Without this, diffusion creates dependency the moment donor support ends |
| Human Capital | Domestic ML engineering pipelines, talent retention structures, public sector AI literacy | Without this, every technical and governance capacity degrades over time as people leave |

These metrics are harder to track than compliance metrics. They require longitudinal monitoring and independent evaluation. They are the only metrics that tell you whether diffusion is working.
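As a rough illustration of what longitudinal monitoring across the four domains might look like in practice, the sketch below records one score per domain for each assessment round and compares rounds over time. Everything specific in it is a hypothetical assumption made for illustration: the 0 to 1 scoring scale, the field names, and the sample figures do not come from the charter or from any real evaluation.

```python
from dataclasses import dataclass

# The four domains from the table above; the short keys are illustrative names.
DOMAINS = ("technical", "governance", "economic", "human_capital")


@dataclass
class Assessment:
    """One independent evaluation round (hypothetical 0-1 score per domain)."""
    year: int
    scores: dict[str, float]

    def weakest_domain(self) -> str:
        # The lowest-scoring domain is the likeliest bottleneck for that round.
        return min(DOMAINS, key=lambda d: self.scores[d])


def trend(history: list[Assessment]) -> dict[str, float]:
    """Change in each domain score between the earliest and latest round."""
    ordered = sorted(history, key=lambda a: a.year)
    first, last = ordered[0], ordered[-1]
    return {d: round(last.scores[d] - first.scores[d], 2) for d in DOMAINS}


# Invented sample data for a single country across two rounds.
history = [
    Assessment(2026, {"technical": 0.4, "governance": 0.3,
                      "economic": 0.5, "human_capital": 0.2}),
    Assessment(2028, {"technical": 0.6, "governance": 0.3,
                      "economic": 0.4, "human_capital": 0.5}),
]

print(history[-1].weakest_domain())  # governance
print(trend(history))
```

In this invented example, technical and human capital scores rise while governance stays flat and economic sustainability slips, the kind of pattern a compliance checklist would not surface.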

The Strategic Window and What It Reveals

The current landscape of AI diffusion shows competing approaches, each with trade-offs in commercial viability, governance, and adoption. There is a temporary window in which leverage exists, as multiple technology sources and governance models are available for consideration.

A genuinely alternative approach would focus on institutional development, collective action, and long-term capacity building, rather than reliance on a single dominant model.

Operationalizing this requires three strategic steps—though these may require instruments beyond the charter's voluntary framework:

- First, resource commitments. That means commons infrastructure, regional compute consortia, and domestic funding that prevents compute monopolization, moving beyond voluntary contributions to sustained investment.

- Second, integration with existing development institutions. Regional development banks and existing scientific networks have resources and legitimacy that new platforms lack. The charter could become the organizing framework for how development finance, research collaboration, and technology transfer are restructured, but this requires binding commitments from those institutions, not just opt-in participation.

- Third, strategic deployment of actual leverage. Developing countries organizing around charter principles create that negotiating power, but only if they maintain coordination through the charter's review mechanisms and resist bilateral side deals that undermine collective bargaining.

Conclusion: The Charter as Diagnostic Document

The Charter for the Democratic Diffusion of AI is significant not primarily because of what it proposes—though the reframing around absorptive capacity is intellectually important—but because of what it reveals about the limits of prior frameworks.

Security-filtered diffusion failed because security clearance has nothing to do with absorptive capacity. Market diffusion fails because it prevents the institutional transfer required for autonomy. Open diffusion failed because it assumed capacity existed when structural constraints made it impossible.

The Charter for the Democratic Diffusion of AI represents the most significant diplomatic reframing of AI governance since the export control era began. As a voluntary framework, it prioritizes inclusion over enforcement—an architectural choice with predictable limitations. Its importance lies not in operational capacity it currently possesses, but in the alternative vision it articulates: diffusion as development intervention rather than security threat or market opportunity. Whether that vision translates into structural change depends on whether endorsers treat it as a binding commitment or a diplomatic gesture.

The charter identifies these failures. It proposes principles oriented toward capacity building. But it does not yet operationalize those principles in ways that would actually overcome the constraints—nor was it designed to. When U.S. or Chinese officials offer AI technology access, the developing world should ask: Does this build autonomous capacity or create dependency? Are we developing human capital or just operating foreign systems? Can we audit and intervene, or are we locked into vendor relationships? Is this participation in governance or consumption of others' rules?

These are the questions the charter raises. The work of answering them—with resource commitments, institutional redesign, and accountability mechanisms that may require going beyond the charter's voluntary architecture—is just beginning. The charter shows what that alternative could look like. The strategic question for the Global South is whether it will actually build it. That work is not the charter's to do. It is the Global South's.

References

Charter Documents

Policy and Regulatory Documents

- U.S. Department of Commerce, Bureau of Industry and Security. (2025). "Framework for Artificial Intelligence Diffusion." Tiered licensing system for advanced semiconductors and closed model weights, effective January 2025. Rescinded May 2025, but export controls remain active.

- U.S. Department of Commerce. Export Controls on Advanced Computing Items to China. Policy updates from 2022–2025.

Historical Context and Analysis

- Chatham House. (2024). "Artificial Intelligence and the Challenge of Global Governance." Analysis of open science models in AI development, 2018–2021 period, and the transition to export controls.

- Andersson Lipcsey, R. (2024). "AI Diffusion to Low-Middle Income Countries: A Blessing or a Curse?" arXiv preprint arXiv:2405.20399. Introduces concept of "absorptive capacity collapse" in technology transfer contexts.

- OpenAI. (2025). "Announcing the Stargate Project." Describes domestic infrastructure buildout and international expansion to UAE, Norway, UK, and Argentina (sovereign partnerships distinct from technology transfer mechanisms).

Development and Capacity Building Framework

- Government of India. (2024). IndiaAI Mission documentation. Demonstrates institutional transfer model combining compute procurement with policy infrastructure, safety research capacity, and inter-ministerial coordination.

- African Union. (2024). AI Strategy and Governance Framework. Regional governance structures for AI oversight and capacity development.

- ASEAN AI Governance Principles. Regional cooperation framework on AI standards and capacity building.

Industry Sources and News Analysis

- Times of India. (2025). "Nvidia CEO Jensen Huang Praises Trump AI Policy." Reports on location verification proposals and industry concerns about competitiveness impacts of export controls.

- WilmerHale. (2025). "U.S. Export Controls on AI Diffusion: Analysis of Recent Developments." Client Alert examining the Biden AI Diffusion Rule and subsequent policy shifts.

Additional References

- World Bank. AI for Development Initiatives. Development finance frameworks relevant to technology transfer and capacity building.

- Gates Foundation. AI for Social Good. Philanthropic funding approaches to AI diffusion in developing countries.

- Rockefeller Foundation. Digital Opportunity Initiative. Infrastructure development and inclusive innovation frameworks.

Readers are encouraged to track: (1) MAITRI implementation decisions, particularly funding mechanisms and governance structures; (2) Whether India formally endorses the charter and under what conditions; (3) Regional AI governance initiatives that adopt or adapt charter principles; (4) Bilateral technology partnerships to assess whether major powers are actually negotiating differently; (5) Institutional developments in the Global South around AI capacity building and sovereignty safeguards.